SC-NeuroCore

Universal Stochastic Computing Framework for Neuromorphic Hardware

SC-NeuroCore provides a complete stack for building, simulating, and deploying stochastic computing (SC) neural networks — from individual neurons to full SCPN layer hierarchies, with both software simulation and Verilog RTL for FPGA deployment.

Version 3.15.0 | 174 neuron models | optional Rust engine + Python front-end | HDL generation + hardware guides | PyPI | GitHub

v4.0 transition

Until v4.0, this repository intentionally keeps a broad kitchen-sink research surface in one checkout while the current experimental verification campaigns determine which runtime, compiler, hardware, bridge, and research paths are promoted. v4.0 is planned as the stable public API freeze and the point where the source tree is split into several focused repositories.

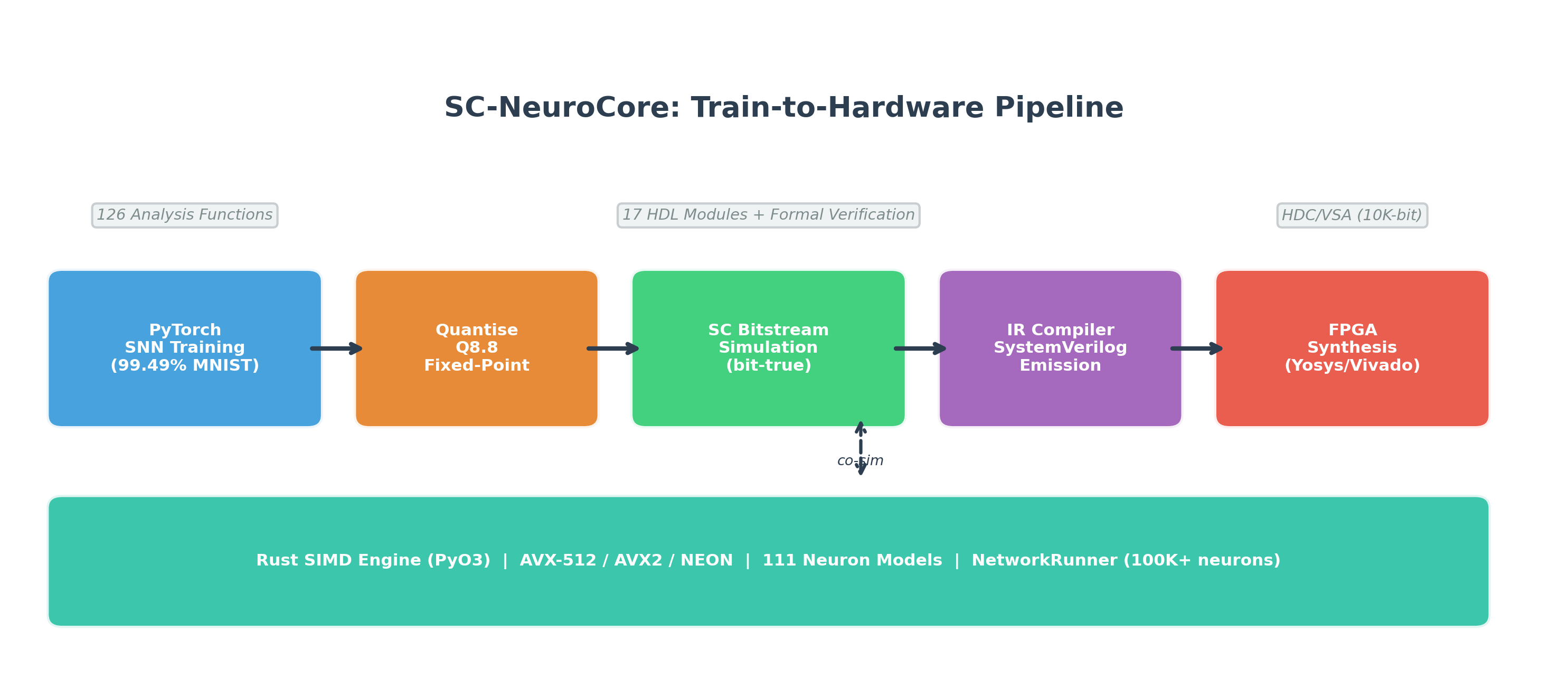

Train in PyTorch → Quantise to Q8.8 → Simulate with stochastic bitstreams → Compile to SystemVerilog → Synthesise for FPGA. The Rust SIMD engine accelerates all stages.
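The quantisation and bitstream steps of this pipeline can be illustrated in plain Python. This is a minimal sketch, not SC-NeuroCore's implementation: it assumes Q8.8 means a signed 16-bit value with 8 fractional bits and saturation at the range limits, and it assumes a simple unipolar encoding where each bit is 1 with probability equal to the encoded value. All function names here are hypothetical.

```python
import random

Q88_SCALE = 256                    # 2**8: eight fractional bits (assumed layout)
Q88_MIN, Q88_MAX = -32768, 32767   # signed 16-bit range (assumed)


def quantise_q88(x):
    """Return the signed Q8.8 integer code nearest to x, saturating at the limits."""
    raw = round(x * Q88_SCALE)
    return max(Q88_MIN, min(Q88_MAX, raw))


def dequantise_q88(raw):
    """Map a Q8.8 integer code back to a float."""
    return raw / Q88_SCALE


def encode_bitstream(p, length, rng):
    """Unipolar encoding: each bit is 1 with probability p (0 <= p <= 1)."""
    return [1 if rng.random() < p else 0 for _ in range(length)]


def decode_bitstream(bits):
    """Estimate the encoded probability as the fraction of ones."""
    return sum(bits) / len(bits)


rng = random.Random(42)              # seeded for reproducibility
weight = 0.3
code = quantise_q88(weight)          # 77
approx = dequantise_q88(code)        # 0.30078125
bits = encode_bitstream(approx, 4096, rng)
estimate = decode_bitstream(bits)    # close to approx, with sampling noise
print(code, approx, estimate)
```

The quantisation error is bounded by half a step (1/512), while the bitstream estimate carries sampling noise that shrinks as the stream gets longer.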

Key Features

- 174 neuron models — McCulloch-Pitts (1943) through ArcaneNeuron (2026), 9 hardware chip emulators, 9 AI-optimised

- 174 Rust neuron models — PyO3 bindings, 161-model NetworkRunner with Rayon parallelism

- ArcaneNeuron — primary self-referential cognition model with 3 coupled compartments (fast / working / deep) + attention gate + self-model predictor

- Identity substrate — persistent spiking network with checkpointing, trace encoding/decoding, L16 Director control

- Network simulation — Population-Projection-Network with 3 backends (Python, Rust, MPI)

- MPI distributed — billion-neuron scale via mpi4py

- Model zoo — 10 pre-built configs, 3 pre-trained weight sets (MNIST, SHD, DVS)

- 127-function analysis toolkit — spike train stats, distance, correlation, causality, decoding (23 modules)

- 14 visualization plots — raster, voltage, ISI, PSD, cross-correlogram, and more

- 13 advanced plasticity rules — pair/triplet/voltage STDP, BCM, BPTT, TBPTT, EWC, e-prop, R-STDP, MAML, homeostatic, STP, structural

- 7 biological circuits — gap junctions, tripartite synapse (astrocyte), Rall dendrite, cortical column, lateral inhibition, WTA, gamma oscillation

- Packed bitwise layers — 64-bit vectorised AND/MUX/XNOR/NOT/CORDIV for high throughput

- Rust SIMD engine — Rust-backed execution paths with SIMD dispatch and committed benchmark harnesses

- GPU acceleration — PyTorch CUDA + CuPy backend + JAX JIT training

- SNN training — 6 surrogate gradients, 12 differentiable neuron cells/nets (`nn.Module`), SpikingNet + ConvSpikingNet, `to_sc_weights()` bridge to bitstreams

- SCPN layer stack — 16-layer holonomic model (L1 Quantum → L16 Meta) with JAX acceleration

- Equation → Verilog compiler — arbitrary ODE string to synthesizable Q8.8 fixed-point RTL in one function call

- Verilog RTL — synthesis-oriented modules, formal-verification collateral, and targeted co-simulation/parity paths

- HDC/VSA — Hyper-dimensional computing for symbolic AI workloads

- NIR bridge — FPGA backend for NIR (18/18 primitives, recurrent edges, multi-port subgraphs)

- SC→quantum compiler — compile SC operations to quantum circuits, statevector + noisy simulation

- Predictive coding — zero-multiplication SC layer (XOR=error, popcount=magnitude)

- Topological observables — winding number, Ollivier-Ricci curvature, sheaf defect

- Phi* (IIT) — integrated information estimation for spiking networks

- Fault tolerance — SC vs fixed-point degradation benchmark, hardware-aware training

- SpikeInterface adapter — import experimental spike data (spike trains, sorting results)

- Adaptive bitstream length — Hoeffding/Chebyshev bounds for precision-speed tradeoff

- AXI-Stream + DMA — hardware interface modules (stream, DMA, parameterised registers, CDC)

- ANN-to-SNN conversion — `convert()` turns trained PyTorch ANNs into rate-coded SNNs with QCFS activation

- Learnable delays — `DelayLinear` with trainable per-synapse delays via differentiable interpolation

- Deploy helper — `sc-neurocore deploy model.nir --target artix7` scaffolds a project or FPGA flow invocation when the required toolchain is installed

- Mixed-precision SC — per-layer adaptive bitstream length (Hoeffding/sensitivity-based)

- Event-driven FPGA — AER encoder, event neuron, spike router (power proportional to spike rate)

- Neural data compression — waveform and spike-raster codecs, learnable predictors, Rust acceleration, and benchmark artefacts

- conda-forge recipe — ready for conda-forge distribution
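Two of the features above, packed bitwise layers and Hoeffding-based bitstream length, can be sketched together in plain Python. This is an illustrative sketch under stated assumptions, not the library's code: it assumes unipolar, statistically independent bitstreams (for which a bitwise AND yields the product of the encoded probabilities) and uses the standard Hoeffding bound N ≥ ln(2/δ) / (2ε²) to pick a stream length. Function names are hypothetical.

```python
import math
import random

WORD = 64  # bits packed per integer word


def hoeffding_length(eps, delta):
    """Smallest N such that P(|estimate - p| >= eps) <= delta by Hoeffding's inequality."""
    return math.ceil(math.log(2.0 / delta) / (2.0 * eps * eps))


def encode_packed(p, n_bits, rng):
    """Pack n_bits Bernoulli(p) samples into a list of 64-bit words."""
    words = []
    for start in range(0, n_bits, WORD):
        word = 0
        for i in range(min(WORD, n_bits - start)):
            if rng.random() < p:
                word |= 1 << i
        words.append(word)
    return words


def popcount(words):
    """Total number of set bits across all words."""
    return sum(bin(w).count("1") for w in words)


def sc_multiply(a_words, b_words):
    """Word-wise AND: multiplies the probabilities of independent unipolar streams."""
    return [a & b for a, b in zip(a_words, b_words)]


rng = random.Random(0)
n_bits = hoeffding_length(eps=0.05, delta=0.01)   # 1060
a_words = encode_packed(0.5, n_bits, rng)
b_words = encode_packed(0.4, n_bits, rng)
product = popcount(sc_multiply(a_words, b_words)) / n_bits
print(n_bits, product)  # product should be near 0.5 * 0.4 = 0.2
```

Each AND processes 64 stream bits at once, which is where the throughput of a packed representation comes from. If the two streams are correlated, the AND no longer computes a product, so the independence assumption matters.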

The default `pip install sc-neurocore` wheel ships the public core/simulation/domain-bridge package surface under the sc-neurocore product name. Extended source modules such as analysis, viz, audio, dashboard, and swarm remain source-checkout features.

Quick Start

```shell
pip install sc-neurocore
```

When the optional Rust engine is available in the environment, SC-NeuroCore automatically uses it for NetworkRunner, E-I network simulation, batch model dispatch, and SIMD bitstream ops. Without it, everything still works through NumPy fallbacks. See Install Profiles for the base install, optional extras, and the source-build path for acceleration.
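The "use the accelerated engine if present, otherwise fall back" behaviour is a common optional-dependency pattern. The sketch below shows one generic way to do it with the standard library. It does not describe how SC-NeuroCore selects its backend, and the module name `sc_neurocore_engine` is a hypothetical placeholder.

```python
import importlib.util


def pick_backend(candidates=("sc_neurocore_engine",), fallback="numpy"):
    """Return the first importable top-level module name, or the fallback label.

    The candidate name is a hypothetical placeholder, not a confirmed package name.
    """
    for name in candidates:
        # find_spec returns None when a top-level module cannot be found,
        # without importing it.
        if importlib.util.find_spec(name) is not None:
            return name
    return fallback


print(pick_backend())  # "numpy" unless the placeholder module is installed
```

Probing with `find_spec` avoids paying the import cost of an accelerator just to learn whether it exists.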

```python
from sc_neurocore import VectorizedSCLayer, BitstreamEncoder

layer = VectorizedSCLayer(n_inputs=8, n_neurons=4, length=1024)
output = layer.forward([0.3, 0.5, 0.7, 0.2, 0.8, 0.1, 0.6, 0.4])
print(output)  # array of firing-rate probabilities
```

Architecture

| Tier | Modules | Ships in wheel |
|---|---|---|
| Core | neurons, synapses, layers, sources, utils, recorders, accel, compiler, hdl_gen, hardware | Yes |
| Simulation | hdc, solvers, transformers, learning, graphs, ensembles, export, pipeline, training | Yes |
| Domain bridges | quantum API guards, adapters/holonomic, scpn | API guards ship; Qiskit/PennyLane/JAX extras are research-grade opt-ins |
| Research | robotics, physics, bio, optics, chaos, sleep, interfaces | Source only |
| Frontier | analysis, viz, audio, dashboard, generative, world_model, swarm | Source only |

See Architecture for the full package map.

Tutorials

| Tutorial | Topic |
|---|---|
| SC Fundamentals | Bitstream encoding, arithmetic, noise analysis |
| Building Your First SNN | Neurons, synapses, layers, simulation |
| Surrogate Gradient Training | Train SNNs with backpropagation |
| Hyper-Dimensional Computing | Symbolic AI with high-dimensional vectors |
| FPGA in 20 Minutes | Train → quantise → synthesise → deploy |
| FPGA Deploy Cookbook | Five-minute scaffold, optional synthesis, report-to-optimiser handoff |
| Rust Engine & Performance | SIMD tiers, GPU, benchmarking |
| Brunel Network Translation | Brian2 → SC conversion workflow |
| Spike Codec Library | 6 codecs for BCI, probes, neuromorphic, real-time |

Documentation

- Getting Started — Installation and first steps

- Install Profiles — Base install, optional extras, and research-only polyglot boundary

- Alternative Paths — Safe opt-in workflow for baseline vs candidate implementations

- Stable Engine Bridge Contracts — Maintained wrapper modules for Rust engine consumers

- Acceleration Mirror Authority — Which Julia/Mojo acceleration files are authoritative today and which are mirrors only

- Neuron Integrator Paths — Explicit baseline vs higher-order integrator routes for selected neuron models

- Stochastic Source Emitters — Explicit standalone RTL emitters for LFSR-16 and Sobol-16

- Async AER HDL — Research-stage 4-phase AER wrapper around the stable synchronous HDL path

- Kuramoto Phase HDL — Research-stage fixed-point Kuramoto emitter for bounded synthesis experiments

- Surrogate Execution Paths — Explicit `custom_op` vs legacy autograd surrogate routes for PyTorch training

- Network To Torch Bridge — Explicit differentiable bridge from declarative `Network` graphs to torch execution

- JAX Surrogate Execution Paths — Explicit `custom_vjp` vs legacy `stop_gradient` routes for JAX training

- Equation Units — Opt-in strict dimensional validation for `EquationNeuron` and `from_equations(...)`

- SCPN NeuroCore Bridge API — Canonical `scpn_neurocore` bridge artifacts and datastream packets for cross-repository SCPN workflows

- API Reference — Python package API

- Rust Engine API — High-performance Rust engine docs

- Hardware Guide — FPGA deployment workflow

- FPGA Deploy Cookbook — Five-minute scaffold, optional synthesis, report-to-optimiser handoff

- Benchmarks — Performance measurements

- For Research Labs — Setup guide for neuroscience, hardware, and ML labs

- Pricing — Free for research, commercial licenses available

Demo

See the Neuron Explorer Notebook for an interactive walkthrough of all 174 neuron models with voltage traces, phase portraits, and F-I curves. The NIR Bridge Notebook demonstrates importing NIR graphs and simulating spiking networks. Or try the Quickstart on Google Colab — no installation required.

Community & Ecosystem

SC-NeuroCore integrates with the NIR (Neuromorphic Intermediate Representation) ecosystem, connecting to Norse, snnTorch, Lava-DL, and hardware targets including BrainScaleS-2, Loihi, and SpiNNaker2. SC-NeuroCore adds the missing FPGA deployment backend via bit-true Verilog co-simulation.

Contact: protoscience@anulum.li | GitHub Discussions | www.anulum.li

SC-NeuroCore is developed by ANULUM / Fortis Studio